Nicola Jane Buttigieg introduces her ‘Beatbox Notation Loop Builder’, which explores alternative ways to approach musical notation.

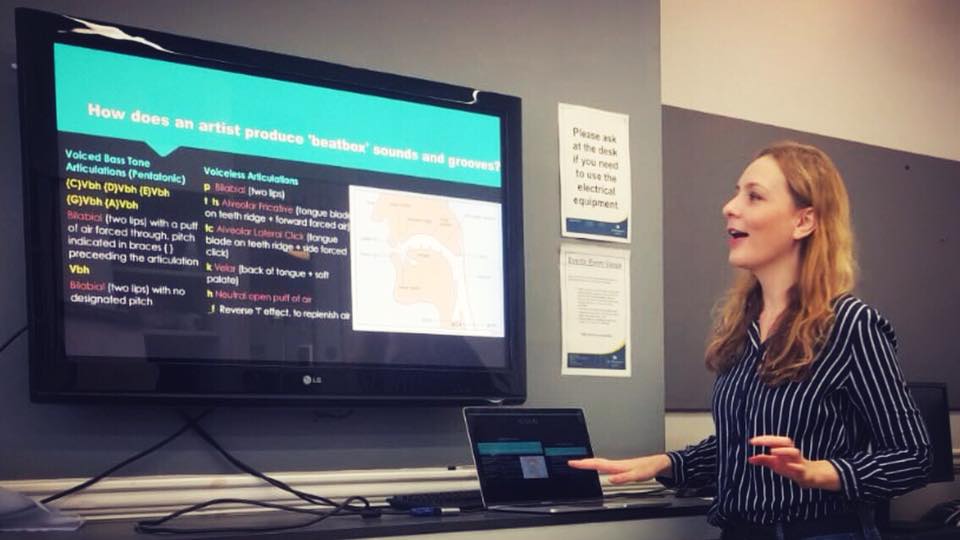

Nicola at Victoria Music Library as a part of the 2018 Fun Palaces Community Campaign.

Prompted by my ongoing curiosity about the future of unplugged, human-read musical notation, and about the ownership and credibility of composers’ digitally produced music, I was eager to anticipate the method by which musical compositions of any description might be accurately recorded in written form. I also considered how this written form might be transcribed and catalogued into digital, printable documents to accompany matching audio recordings.

Whilst it seemed that a logical first step in this method might be to determine a universal approach to marking effective ‘sound representations’ of any description, I came to the conclusion that a more refined, manageable approach might be to work via a ‘Rosetta Stone’-style mode of abstraction. In due course, a universal language for ‘sound production’ might then be revealed.

Engaged in this approach, I predicted that, using this newly proposed universal language, AI and humans alike might be able to transfer ideas of any possible sound description, rooted in either sonic-acoustic or sonic-digital mediums. The form of this universal language must therefore be capable of being handwritten and printed, but must also exist in binary-compilable form (so as to be understood by ever-evolving computer applications). The most immediately obvious and logical suggestion would be for the language to evolve via patterns of standard keyboard characters, and thus via a version of typed ‘source code’.

Realistically, what use might this source code be in its purest form to any standard musician, whether DAW (Digital Audio Workstation) trained or traditionally skilled? There would need to be another easily readable, stand-alone notation format from which this typed, more complicated source code could be derived. This would allow a skilled musician of either of the above disciplines to ‘train’ to read the simpler format, solely for the purpose of communicating with musicians of the other notation discipline. The shorthand format would ideally be easy to handwrite or to enter via typed shortcut characters. This would allow a traditional conductor to synchronise digitally inspired sound components into a hybrid digital-acoustic ensemble performance. Put even more simply, in the context of the a cappella vocal ensemble: including an evolved shorthand text-notation staff on the traditional-style choral score would facilitate the accurate inclusion of beatbox performer/co-writer segments for effective, synchronised rehearsal.


An example of the notation explained in colour

Two things have become clear with the rise in popularity of beatbox composition and of vocal percussion additions to a cappella ensemble arrangements, which mimic (often remarkably effectively) computed sound design arrangements. Firstly, it could be argued that the human voice is by far the most easily accessible, sophisticated and malleable instrument of all, coming in many varieties of vocal timbre and affected by just as many varieties of facial cavity and shape, which makes the possibilities of sound texture highly diverse. Secondly, it is essential that digital, frequency-measured sound is always communicated in written form via binary-compilable means: a system equally recognised by humans and computers. It is equally essential that written notation can accurately record the infinite number of electronically produced timbre manipulations in music, and can evolve to accommodate constantly developing music production technologies and genres.

Therefore, for a core, computer-readable ‘source code’ to be adopted by all existing professional musicians, the simpler shorthand language from which the code might be derived would likely need to evolve from three sources: our existing IPA phonetic speech library, the traditional rhythm and pitch aspects of the Western music notation system, and current DAW notation systems, including their scope for plugin manipulation.

My fascination with beatbox in ensemble music arrangements follows years of practical community experimentation investigating how to avoid latency in combined electro-acoustic performance. A predicted reality might be that future ensembles of skilled, mic'd beatbox artists could replace the need for any ineffective digital track playback during combined electro-acoustic performance. Providing the correct mic filter applications, and making these measurements universally representable within an evolved paper score, would mean that the electronic sound components of a hybrid composition might be successfully mimic-performed and filter-processed in live sync by an ensemble of professional beatbox artists.

With the aim of investigating an open, public response to this concept, a series of workshops was held last summer in local Westminster community venues, and I was then able to bring the newly trialled idea into a formal high school education setting. Formal weekly workshop sessions were approved as part of the enrichment programme at St. Benedict's School in West London, in order to monitor the progress of the new notational learning tool for young beginners of beatbox technique. The goal was for young, non-traditional notation readers to learn the skills of beatbox production and trial notation reading, as well as to collaborate using the written notation technique.


A prototype set of predictable character symbols (to represent digitally-inspired percussive sounds) was coached, and the sounds were delivered by the manipulation of lips, tongue, soft palate and muscles of the lower jaw, in conjunction with the teeth and hard palate. In addition, variation was recognised between voiced and unvoiced versions of many of these obstructions. The students strongly pointed out that, when voiced, there was the possibility of producing any frequency within the human-capable sound spectrum, not just those that make up the tonal system represented on the established notation staff.
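As a rough illustration only, a symbol set of this kind could be held as a small lookup table. The symbols and descriptions below are hypothetical placeholders and are not the prototype set used in the workshops; the sketch simply shows how each keyboard character might carry the articulators involved and whether the sound is voiced.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sound:
    symbol: str        # keyboard character used in the shorthand
    articulators: str  # where the obstruction is formed
    voiced: bool       # True if the vocal folds vibrate


# Hypothetical symbols chosen for illustration; not the workshop set.
SOUNDS = {
    "p": Sound("p", "lips", False),
    "b": Sound("b", "lips", True),
    "k": Sound("k", "tongue and soft palate", False),
    "g": Sound("g", "tongue and soft palate", True),
}


def describe(symbol: str) -> str:
    """Return a one-line description of a shorthand symbol."""
    sound = SOUNDS[symbol]
    voicing = "voiced" if sound.voiced else "unvoiced"
    return f"{sound.symbol}: {voicing}, made with {sound.articulators}"
```

Keeping the voiced and unvoiced versions as separate entries mirrors the pairing noted in the sessions, and leaves room to add pitch or frequency information to the voiced entries later.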

During the sessions we frequently referred to the International Phonetic Alphabet (IPA), which covers articulations for all existing languages in the world (most recently updated in 2015), as well as the Frequency Sound Spectrum used in digital music DAWs, to aid our understanding of sound textures and qualities. All agreed that having these ideas held in a single, accurately written form could perhaps mean greater compositional power overall.

To realise a first prototype browser-based tool with which to practise the above, I have built the beginnings of an incorporated ‘Beatbox Notation Loop Builder’, which I hope will do justice to the development of the new written language approach. It has also been designed to help demonstrate a foundation for the overall integrated ‘beatbox-standard music score’ concept. It currently works best in a Google Chrome browser:

http://beatboxcodenotation.com/index.html


The Beatbox Notation Loop Builder in action
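The general idea of turning a typed pattern into a timed loop can be sketched in a few lines. This is not the code behind the Loop Builder itself: the space-separated pattern format, the use of "-" for a rest, and the function names `build_loop` and `loop_length` are all assumptions made for the purpose of illustration.

```python
def build_loop(pattern: str, bpm: float, steps_per_beat: int = 2):
    """Return (start_time_in_seconds, symbol) pairs for one pass of the loop.

    The pattern is a hypothetical shorthand: space-separated symbols,
    one per step, with "-" marking a rest.
    """
    step_seconds = 60.0 / bpm / steps_per_beat
    events = []
    for index, token in enumerate(pattern.split()):
        if token != "-":  # rests produce no sound event
            events.append((index * step_seconds, token))
    return events


def loop_length(pattern: str, bpm: float, steps_per_beat: int = 2) -> float:
    """Return the duration in seconds of one pass of the loop."""
    return len(pattern.split()) * 60.0 / bpm / steps_per_beat
```

For example, `build_loop("b - k -", bpm=120)` places "b" at 0.0 seconds and "k" at 0.5 seconds, and `loop_length("b - k -", bpm=120)` gives a one-second loop. Because the pattern is plain typed text, the same line could be handwritten on a score or read by a program.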

More details and examples of test notations may be found on the site alongside the prototype loop builder at: http://beatboxcodenotation.com/HowItWorks.html

By Nicola Jane Buttigieg


Nicola is currently completing the MSc Computer Science programme at the University of Hertfordshire, following formal training in sound engineering and digital orchestration via Berklee College of Music. She has digitally scored numerous short film projects, released original tracks for online streaming, scored and orchestrated six live stage musicals and delivered two West End musical theatre showcases of original material, sponsored by Really Useful Group Theatres.
